Most businesses aren’t ready for AI — not because of the tools, but because of what’s underneath them.

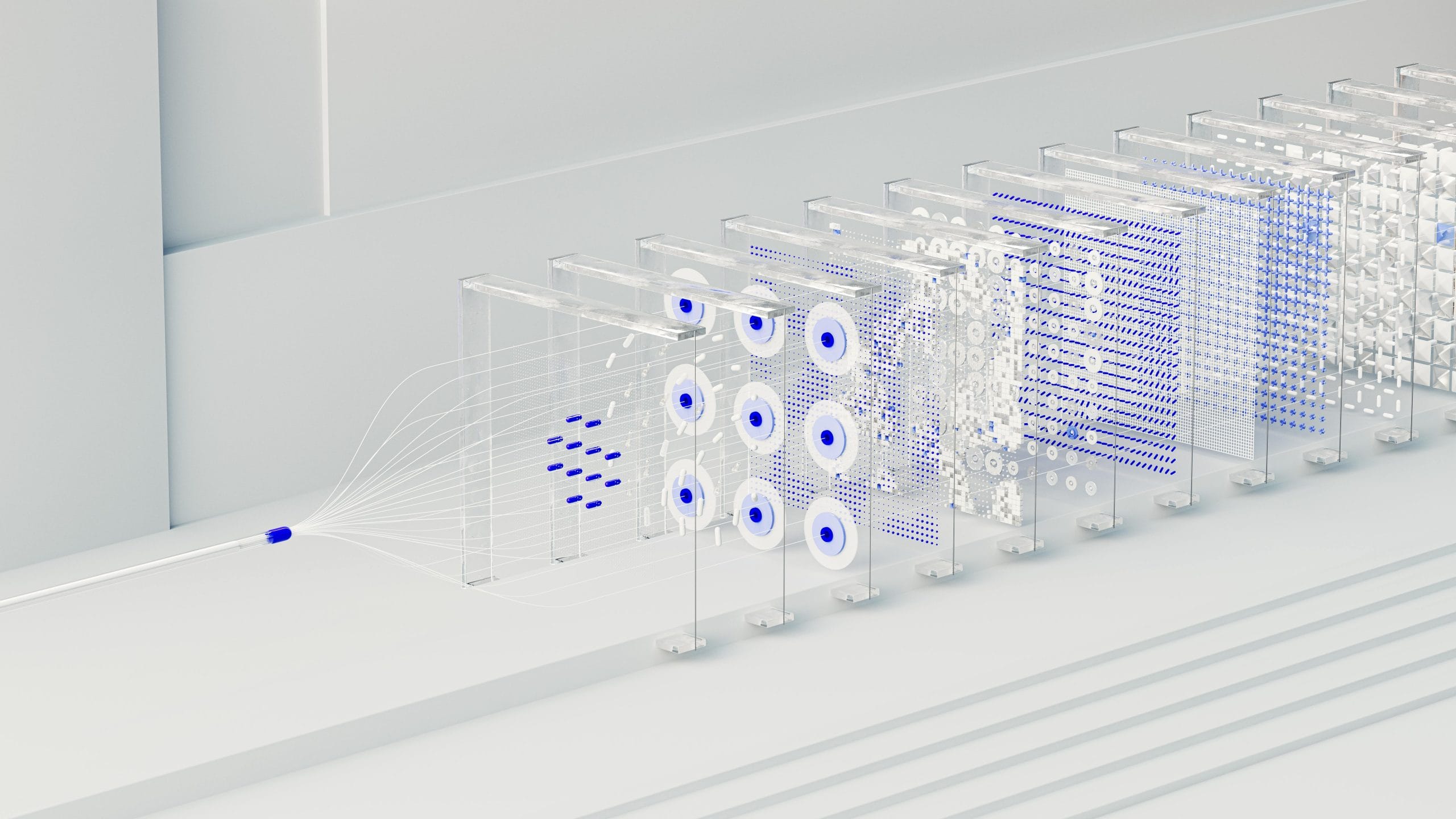

There’s a lot of excitement right now about AI-powered marketing tools. Smarter ad targeting. Automated campaign recommendations. Predictive customer insights. The pitch is compelling: feed your data in, get better decisions out.

The problem is the “feed your data in” part.

For many organizations — especially those running multiple locations, brands, or channels — the data going into these tools is fragmented, inconsistently tracked, and only partially accurate. And when that’s the case, AI doesn’t solve the problem. It amplifies it.

The Garbage In, Garbage Out Problem (Revisited)

This isn’t a new concept. But it takes on a different character in the AI era.

In the past, bad data meant bad reports. A dashboard showed the wrong number. Someone noticed. You fixed the query. The feedback loop was visible.

With AI-powered tools, the feedback loop is harder to see. The tool produces a recommendation that sounds authoritative because it’s delivered with confidence. Your team acts on it. Results disappoint. And no one immediately thinks to ask: was the underlying data even right?

We’ve seen this play out in a specific way with multi-location businesses. Consider a restaurant group running five or more locations. Each location may have been set up at a different time, by a different vendor, with a slightly different tracking configuration. Some fire GA4 events correctly. Some don’t. Meta’s Pixel may be live on the main site but missing from location-specific landing pages. The Conversions API might be sending duplicate events — or none at all.

From the outside, the dashboards look populated. There’s data. Charts exist. Reports run.

But when an AI tool ingests that data to make recommendations about where to spend ad budget, which locations to prioritize, or which campaigns to scale, it’s working from a distorted picture. The outputs feel actionable. They just aren’t accurate.

What This Looks Like in Practice

Here’s a realistic scenario.

A multi-location operator connects their ad platform data to an AI-powered analytics tool. The tool analyzes performance across locations and recommends doubling down on Location C, which shows the highest attributed conversion rate.

What the tool doesn’t know:

- Location C’s tracking was reconfigured three months ago, which reset its conversion history, so its rate reflects only a short window of data

- Locations A and B have a known double-counting issue that inflates their event counts

- Two locations aren’t sending any offline conversion data at all

The recommendation isn’t wrong because the AI is bad. It’s wrong because the data it analyzed was wrong. The model did exactly what it was designed to do — it just had nothing reliable to work with.
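To make the mechanism concrete, here is a minimal sketch of how double-counting and missing offline conversions can change which location looks best. Every number is invented for illustration, and `LocationStats`, `duplicate_factor`, `untracked_offline`, and Location D are hypothetical names, not fields from any real tool.

```python
from dataclasses import dataclass


@dataclass
class LocationStats:
    """Hypothetical per-location numbers; every value below is invented."""
    name: str
    sessions: int
    reported_conversions: int
    duplicate_factor: float = 1.0  # 2.0 means each conversion is recorded twice
    untracked_offline: int = 0     # conversions that never reached the tool

    @property
    def reported_rate(self) -> float:
        # The rate an analytics tool would compute from the raw counts.
        return self.reported_conversions / self.sessions

    @property
    def adjusted_rate(self) -> float:
        # Remove duplicates, then add back conversions the tool never received.
        deduplicated = self.reported_conversions / self.duplicate_factor
        return (deduplicated + self.untracked_offline) / self.sessions


locations = [
    LocationStats("A", sessions=10_000, reported_conversions=600, duplicate_factor=2.0),
    LocationStats("B", sessions=8_000, reported_conversions=400, duplicate_factor=2.0),
    LocationStats("C", sessions=1_000, reported_conversions=70),
    LocationStats("D", sessions=9_000, reported_conversions=270, untracked_offline=450),
]

best_reported = max(locations, key=lambda loc: loc.reported_rate).name
best_adjusted = max(locations, key=lambda loc: loc.adjusted_rate).name
print(best_reported, best_adjusted)  # C D
```

In this toy data, Location C wins on the reported numbers, but once the known gaps are accounted for, a different location comes out ahead. The tool never sees the second column.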

This is the AI readiness gap. And it’s more common than most teams realize.

Why Fragmented Tracking Is So Hard to Catch

For single-location businesses, tracking issues are relatively contained. You have one website, one funnel, one set of events to validate.

Multi-location operators face a different problem. The same analytics setup gets replicated — or improvised — across dozens of locations. Implementations drift. Updates get applied inconsistently. New channels get added without being fully integrated into the existing structure.

No one sets out to build fragmented tracking. It accumulates gradually, often invisibly, as the business grows.

By the time a team starts exploring AI-powered tools, the tracking foundation has rarely been examined. The assumption is that the data is there, so it must be usable. That assumption is worth pressure-testing before any AI layer is added on top.

AI Readiness Starts With Data Trust

Getting ready for AI isn’t primarily a technology problem. It’s a data quality problem.

The organizations that will get the most out of AI-powered tools are the ones that have already done the foundational work:

- Consistent event tracking across all locations and channels, not just the flagship site

- Validated conversion data that accurately reflects actual customer actions

- Clean, deduplicated signals flowing into ad platforms and analytics tools

- A shared definition of key metrics so the AI is optimizing for what the business actually cares about

This doesn’t require a massive infrastructure overhaul. It requires a structured audit of what’s actually being tracked, what’s missing, and what’s producing noise instead of signal.
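One piece of that audit, checking what’s missing, can be sketched in a few lines. This assumes you can export the list of event names each location has actually sent. The location names, the `EXPECTED_EVENTS` baseline, and the `find_missing_events` helper are all hypothetical, and your own baseline will depend on what the business considers a key event.

```python
# Hypothetical baseline: the events every location is expected to send.
EXPECTED_EVENTS = {"page_view", "generate_lead", "purchase"}


def find_missing_events(observed_by_location, expected=EXPECTED_EVENTS):
    """Return {location: sorted list of expected events that were never seen}."""
    gaps = {}
    for location, events in observed_by_location.items():
        missing = expected - set(events)
        if missing:
            gaps[location] = sorted(missing)
    return gaps


# Invented export: event names observed per location over some review window.
observed = {
    "downtown": ["page_view", "generate_lead", "purchase"],
    "airport": ["page_view"],
    "harbor": [],
}
print(find_missing_events(observed))
# {'airport': ['generate_lead', 'purchase'], 'harbor': ['generate_lead', 'page_view', 'purchase']}
```

A location that appears in the output with every expected event missing is usually the first place to look, since it may not be tracked at all.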

Once that foundation is in place, AI tools can do what they’re designed to do. The recommendations become trustworthy because the inputs are trustworthy.

A Better Sequence

If you’re evaluating AI-powered marketing or analytics tools — or already using them and wondering why the recommendations don’t feel right — the most valuable thing you can do first isn’t to upgrade the tool.

It’s to pressure-test the data underneath it.

That means walking through your current tracking setup with fresh eyes: What’s firing? What’s missing? What’s being counted more than once? What’s been broken since that site redesign six months ago?
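The “counted more than once” question is often the easiest to check mechanically. The sketch below assumes an export where each conversion carries an event name and a shared event ID, which is the same pairing Meta uses to deduplicate Pixel and Conversions API events. The record layout, the sample IDs, and the `find_duplicates` helper are hypothetical.

```python
from collections import Counter


def find_duplicates(events):
    """Return {(event_name, event_id): count} for keys seen more than once."""
    counts = Counter((e["event_name"], e["event_id"]) for e in events)
    return {key: n for key, n in counts.items() if n > 1}


# Invented sample: the same purchase reported by both the browser and the server.
events = [
    {"event_name": "purchase", "event_id": "ord-1001", "source": "pixel"},
    {"event_name": "purchase", "event_id": "ord-1001", "source": "server"},
    {"event_name": "purchase", "event_id": "ord-1002", "source": "pixel"},
]
print(find_duplicates(events))
# {('purchase', 'ord-1001'): 2}
```

If events arrive without a shared ID at all, this check will report nothing, which is itself a finding: the platform has no way to tell the two copies apart.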

It’s not a glamorous project. But it’s the one that makes everything else work.

Ready to Pressure-Test Your Data?

DataNicely helps multi-location businesses audit and rebuild their tracking infrastructure — so the data feeding your decisions, dashboards, and AI tools is actually reliable. If you’re not confident in your numbers, let’s talk.